Blog post

Axing the leap second is not science — it is a defeat against it

By Marco W. Soijer

Clock synchronisation is fascinating. Simple as it may seem, trying to keep two clocks in sync is more of a problem than one may think, unless you do not care about the transmission times when exchanging messages. But for any high-accuracy timing problem, transmission times are relevant.

The question of which time to sync to, however, always seemed more obvious: UTC, of course. Co-ordinated universal time shares the advantages of international atomic time (TAI) in being perfectly stable and constant, while always staying within one (actually 0.9) second of universal time (UT) and thus reflecting 'true' time on Earth, which depends on our planet's orientation towards the Sun.

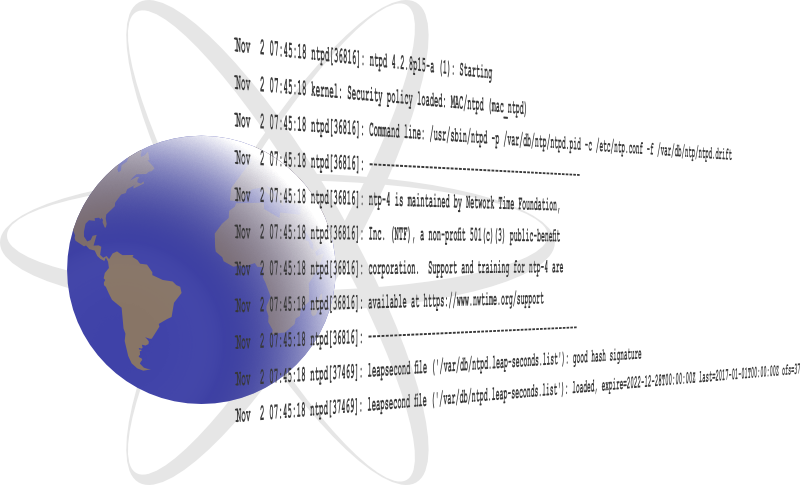

To keep UTC in sync with UT despite the Earth's unstable rotation, leap seconds are introduced every now and then. Since 1972, 27 of them have been inserted; together with the initial ten-second offset, UTC now lags TAI by 37 seconds. It basically means that typically on 31 December, after 23:59:59 comes 23:59:60, and only one second later 0:00:00. No big deal for grandma's pendulum clock, but apparently a big problem for computers. The community of telecommunications engineers has therefore been debating this for years, and they came up with a decision this week: as of 2035, no more leap seconds will be introduced. What they have not thought of is a solution for the underlying problem. "Science", whoever that may be, is to come up with a solution over the next decades. If things get too bad, we can always insert a leap minute or even an hour. Sounds like a great plan. The best part of it is probably the fact that the people who came up with it will be dead by the time such a leap minute needs to be introduced.

The elephant in the room is the Unix time stamp

Computers depend on accurate time and they cannot cope with a leap second. That is acceptable and has been known for as long as computers have existed. So let them internally use TAI, or the closely related GPS time, which is stable, monotonic and does not use leap seconds. It is the same everywhere around the world, so it is also very practical. When representing a time to a human user, simply add the current leap second shift, plus any adaptation for local time zones or daylight saving time. So why did the leap second need to go?
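To make that last step concrete, here is a minimal Python sketch of how such a conversion could look. It assumes the internal clock delivers TAI as a naive `datetime`, and that TAI is 37 seconds ahead of UTC, which has been the case since 1 January 2017 (so the "shift" to add is negative). The names `TAI_MINUS_UTC_SECONDS` and `tai_to_display` are made up for this illustration; a real system would take the shift from a maintained leap-second table rather than a constant.

```python
from datetime import datetime, timedelta, timezone

# Assumption: TAI - UTC in seconds, valid since 1 January 2017.
# A real system would look this up in a maintained leap-second table.
TAI_MINUS_UTC_SECONDS = 37


def tai_to_display(tai: datetime, utc_offset_hours: float = 0.0) -> datetime:
    """Turn a naive datetime holding TAI into an aware local datetime."""
    # Step 1: apply the leap-second shift to get from TAI to UTC.
    utc = (tai - timedelta(seconds=TAI_MINUS_UTC_SECONDS)).replace(tzinfo=timezone.utc)
    # Step 2: apply the local offset (time zone plus any daylight saving).
    return utc.astimezone(timezone(timedelta(hours=utc_offset_hours)))


stamp = tai_to_display(datetime(2024, 1, 1, 0, 0, 37), utc_offset_hours=1)
print(stamp.isoformat())  # 2024-01-01T01:00:00+01:00
```

The internal value never jumps; only the presentation layer knows about leap seconds and time zones. One limitation of this sketch: Python's `datetime` cannot represent 23:59:60, so the leap second itself would need special handling.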

There seems to be only one reason. On Unix systems, which make up basically all of the IT infrastructure nowadays, there is the famous time stamp: an 86400-seconds-per-day counter that started in 1970 and always reaches a round multiple of 86400 at midnight. It is the basis for all time calculations on all systems. Local time zone offsets are multiples of 3600 for each hour. The time stamp is linked to UTC, so it is subject to leap seconds. The problem with IT and leap seconds is therefore not the leap second itself. It is the way leap seconds are implemented on Unix systems: they are included in the underlying reference time, instead of simply being added when the time is represented in human-readable form, together with local time zone information.
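The effect can be seen with a few lines of Python. This is only an illustration, using the leap second inserted at the end of 31 December 2016; the helper name `unix_ts` is made up for this sketch. Python's `datetime` follows the same 86400-seconds-per-day convention as the Unix time stamp.

```python
from datetime import datetime, timezone

SECONDS_PER_DAY = 86400


def unix_ts(year, month, day, hour=0, minute=0, second=0):
    """Unix time stamp of a UTC calendar time (leap seconds are not counted)."""
    moment = datetime(year, month, day, hour, minute, second, tzinfo=timezone.utc)
    return int(moment.timestamp())


before = unix_ts(2016, 12, 31, 23, 59, 59)  # last regular second of 2016
after = unix_ts(2017, 1, 1)                 # midnight, after the leap second

print(after % SECONDS_PER_DAY)  # 0: midnight is always a multiple of 86400
print(after - before)           # 1, although two seconds actually elapsed
```

The second 23:59:60 in between has no time stamp of its own, which is exactly where systems start to repeat, skip or smear time.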

Now this could all be a nice anecdote and something we should live with for historical and compatibility reasons, if the Unix time stamp had been a concept that is proven, practical, and, most importantly, sustainable. But the time stamp is traditionally a signed 32-bit integer. This means that it will roll over on 19 January 2038. We could re-define it as an unsigned integer, discontinue support for dates before 1970, and use the time stamp through 2106, but this only delays the issue to the next generation. This is the actual elephant in the room when it comes to clock synchronisation on computer systems. We are ignoring the drift between solar and atomic time and denying the existence of a solution, just to protect an obsolete and incorrect time counter.
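The two dates follow directly from the size of the counter. As a quick check, here is a small Python sketch; the helper name `last_representable` is made up for this illustration.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def last_representable(max_value: int) -> datetime:
    """UTC time reached when the counter holds its largest possible value."""
    return EPOCH + timedelta(seconds=max_value)


signed_limit = last_representable(2**31 - 1)    # signed 32-bit counter
unsigned_limit = last_representable(2**32 - 1)  # unsigned 32-bit counter

print(signed_limit.isoformat())    # 2038-01-19T03:14:07+00:00
print(unsigned_limit.isoformat())  # 2106-02-07T06:28:15+00:00
```

One second after the first limit, a signed 32-bit counter wraps around to a negative value, which reads as a date in December 1901.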

We should have introduced a new, global and monotonic time reference for Unix systems and a clear protocol on how to add leap seconds as a part of local time zone offsets instead, something that does not seem to be very complicated. Ignoring the need for leap seconds while also ignoring the upcoming year-2038 problem, and leaving them for the next generation to resolve, marks the defeat of telecommunications engineering against true science. At least they have been honest about it. Clock synchronisation will get more and more fascinating towards the end of the next decade.